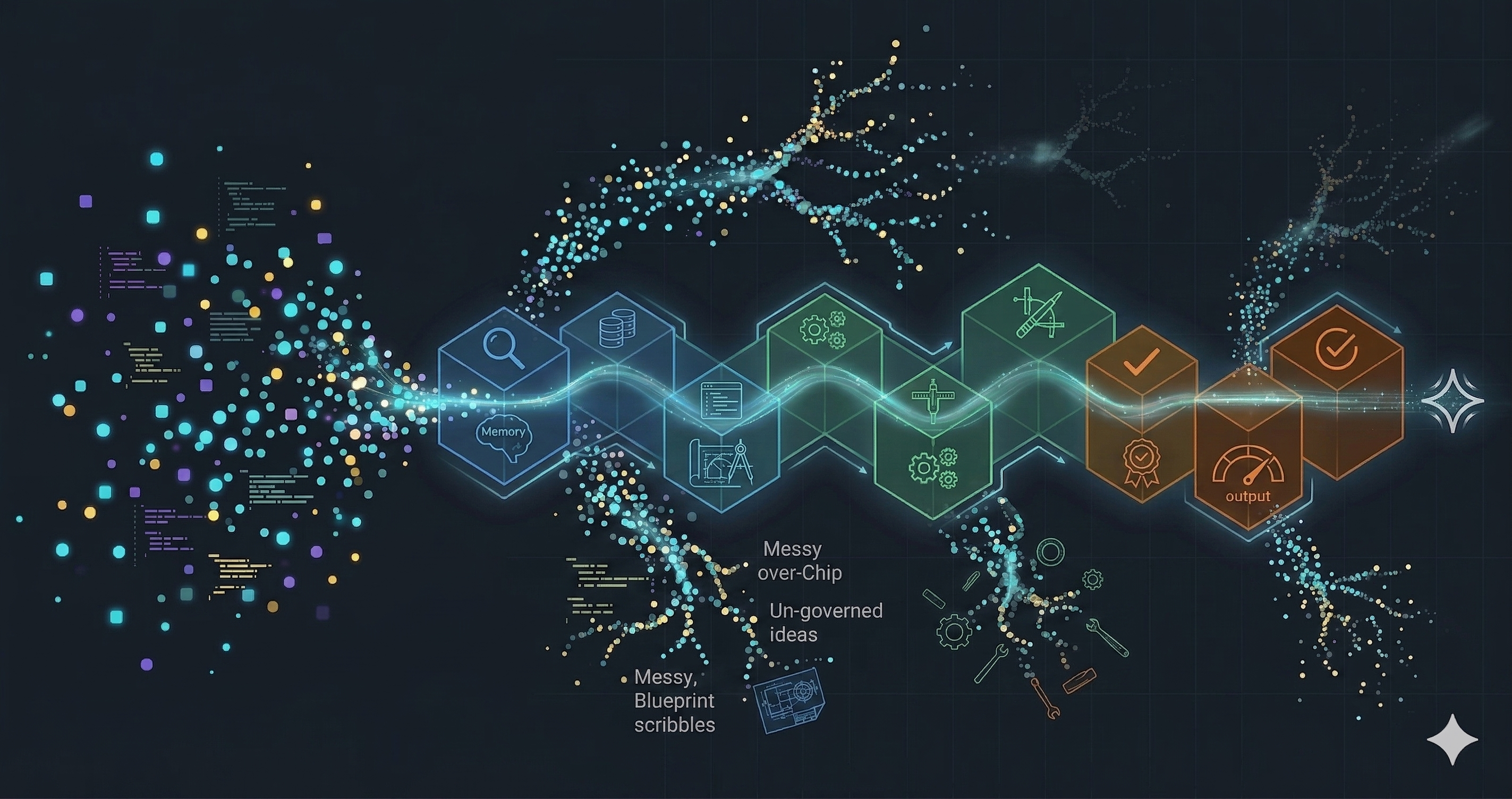

How We Handle LLM Drift: A Ten-Phase Task Lifecycle

The task lifecycle we run at Imagineers to capture chain of thought and beat session compaction on long-running agent tasks.

Better prompts plateau. Context engineering breaks through by assembling the right information into every LLM test generation call.

Running Playwright tests with an LLM gives you per-run analytics. But how do you track patterns across runs and build a full test analytics platform with LLMs?

16 test failures. 2 root causes. $0.05. Your CI already knows what broke. This workflow makes it tell you.

Structured ARIA labels and component docs give AI coding agents everything they need to generate deterministic Playwright tests -- no vision models required.

Why code generation beats visual agents for scalable test automation, and how to strategically use computer vision for edge cases where the DOM is inaccessible.

A 10-part series translating Google’s Context Engineering whitepaper into hands-on QA practice. Each post builds a working tool.

Frontier models ship faster than teams can adapt. This series is how we keep up—and help you do the same.

Foundations

Posts 1–3 · What is Context Engineering and why should QA teams care?

Building QA Memory Systems

Posts 4–7 · Hands-on builds: from test failure memory to self-healing tests

Scaling & Operating

Posts 8–10 · Making it production-ready: multi-agent, monitoring, massive scale

Work with us

Short engagements to upgrade test automation with LLMs.

Start a conversation →

Krishnanand B

Founding Imagineer

11+ years building test automation systems. From Selenium scripts to AI-native platforms.
